The world is full of data and information — lots and lots of information, too much for humans to sort through on their own.

To avoid information overload, computer scientists have created algorithms and systems to help humans sort through all of that data. The algorithms recommend products and articles you might like, or tell you what information other people already searched for.

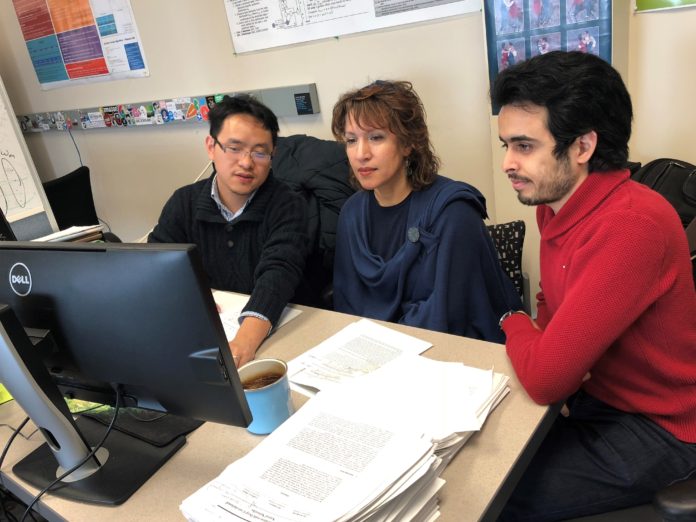

“The world is simply drowning in information,” said Dr. Olfa Nasraoui, a professor of computer engineering and computer science at the University of Louisville. “Our brains couldn’t handle the volumes of information and number of options, especially on social media, so these systems emerged out of necessity — not luxury.”

But while these algorithms may make our lives easier, there’s a problem: they can become biased.

Nasraoui, director of the UofL Knowledge Discovery and Web Mining Lab at the J.B. Speed School of Engineering, is studying this bias and, possibly, how to fix it. Her research is backed by the National Science Foundation.

First, it’s important to note that these algorithms don’t necessarily start out biased. But through machine learning, where computers analyze data examples to “learn” how to make decisions, they learn what humans like and don’t like and show us more of the same.

“It’s possible that you perpetuate your biases as a human being because the algorithm can act as a filter,” she said. “It will filter the world for you.”

This, she said, creates “filter bubbles,” where we only see information that reinforces or strengthens our preexisting beliefs. This can increase polarization and division.

The current algorithms for filtering or sorting information for us typically fall into one of two categories: search engines and recommender systems.

Search engines allow you to sift through the available information to find what you want after you submit a search query. Recommender systems, on the other hand, which are increasingly used on online shopping sites and in apps, learn about us over time and recommend information they think we might like.

As the algorithms analyze our data and look for trends, they can become biased in several ways.

For example, they might notice that people of a certain age group or who live in a certain area like or dislike certain things. This is sample set bias, and it’s why the recommendations in a hip urban neighborhood might look different from those you get in a small town.

Another type is iterative bias, which is common on online shopping platforms. If you search for the same kinds of things over and over, the algorithm will begin to make assumptions about what you like and start limiting what you see by filtering the results.
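To make that feedback loop concrete, here is a minimal sketch in Python. It is only an illustration: the catalog, the interest scores and the function names are invented for this example and are not taken from Nasraoui’s research. The toy recommender shows the two categories with the most past clicks, and the shopper can only click on what is shown.

```python
from collections import Counter


def recommend(click_counts, catalog, k):
    """Return the k categories with the most past clicks.

    Ties are broken by catalog order. This is a hypothetical toy rule,
    not how any real shopping site ranks results.
    """
    return sorted(catalog, key=lambda c: (-click_counts[c], catalog.index(c)))[:k]


def simulate(catalog, true_interest, rounds, k):
    """Run a feedback loop in which the user can only click what is shown."""
    clicks = Counter()
    ever_shown = set()
    for _ in range(rounds):
        shown = recommend(clicks, catalog, k)
        ever_shown.update(shown)
        # The user clicks the shown category they like most.
        choice = max(shown, key=lambda c: true_interest[c])
        clicks[choice] += 1
    return clicks, ever_shown


# Made-up data: this shopper would actually like "garden" and "travel" best.
catalog = ["shoes", "books", "music", "garden", "travel"]
true_interest = {"shoes": 0.5, "books": 0.6, "music": 0.4, "garden": 0.9, "travel": 0.8}

clicks, ever_shown = simulate(catalog, true_interest, rounds=20, k=2)
print(clicks)      # every click lands on "books"
print(ever_shown)  # only "shoes" and "books" were ever displayed
```

In this toy run the shopper clicks on books every time, because books is the better of the two options first shown. The categories the shopper would have liked most never appear, which is the narrowing effect described above.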

Aside from just researching this bias, Nasraoui and her team of UofL students, ranging from undergraduates to doctoral candidates, are trying to prevent it.

One idea is to create an algorithm that can “tune” results according to how far the user wishes to explore outside the filter bubble. Another is to find ways to make the algorithms more transparent.
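What might a “tunable” recommender look like? The sketch below is one hypothetical way to picture it, not the team’s actual method. It assumes a setting called `explore`, a number between 0.0 and 1.0 chosen by the user, that decides what share of the recommendation slots goes to items outside the user’s usual interests. The function name and the sample items are made up for illustration.

```python
def tuned_recommendations(familiar, unfamiliar, slots, explore):
    """Fill `slots` positions, giving roughly a share `explore` to unfamiliar items.

    `familiar` and `unfamiliar` are lists already ranked best-first.
    `explore` is a hypothetical user-controlled dial from 0.0 to 1.0.
    """
    if not 0.0 <= explore <= 1.0:
        raise ValueError("explore must be between 0.0 and 1.0")
    # Number of slots reserved for items outside the filter bubble.
    n_new = min(round(slots * explore), len(unfamiliar))
    # Remaining slots go to items similar to past behavior.
    n_old = min(slots - n_new, len(familiar))
    return familiar[:n_old] + unfamiliar[:n_new]


# Made-up example items.
familiar = ["book A", "book B", "book C", "book D"]
unfamiliar = ["garden A", "travel A", "music A", "shoes A"]

print(tuned_recommendations(familiar, unfamiliar, slots=4, explore=0.0))
print(tuned_recommendations(familiar, unfamiliar, slots=4, explore=0.5))
print(tuned_recommendations(familiar, unfamiliar, slots=4, explore=1.0))
```

With the dial at 0.0 the user sees only familiar items, at 0.5 the list is split evenly, and at 1.0 every slot goes to something new. Showing the user this dial and what it does is also one simple form of transparency.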

“Hopefully, as more people become aware of Big Data algorithmic biases, these algorithms will evolve for the better,” she said. “We must be aware.”